Aerodynamics is a key part of a vehicle’s performance, and given the cost of building and testing a whole vehicle or component, simulation is a commonly adopted approach in automotive testing to help model aerodynamic behaviour.

By simulating a vehicle using computational methods such as computational fluid dynamics (CFD), engineers can mimic the physical domain and run automotive tests in the form of virtual wind tunnel experiments for a fraction of the price.

The goal of running these tests and capturing data goes beyond design configuration: it also ensures adherence to industry regulations, road safety standards, various inspection and certification services, emissions compliance, and so on.

From an engineering perspective, this testing process allows automotive engineers to understand how designs will perform in advance, narrowing down the right design choices for the prototype stage.

Unfortunately, simulation-based automotive testing can take several hours per run, and these simulations must be repeated for multiple design iterations and for hundreds of different operating conditions.

Furthermore, simulation results are not perfectly accurate. For example, tyre and rim aerodynamics are notoriously difficult to model, so when a prototype is built and tested on the road, the measured results can differ drastically from those predicted by the simulation.

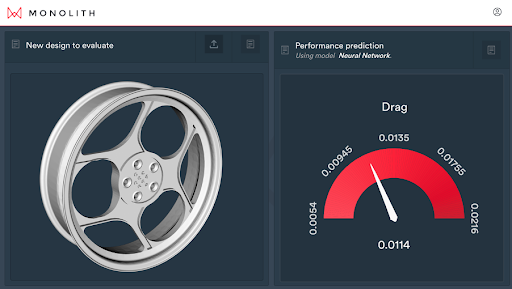

Evaluating aerodynamic rim performance inside the Monolith AI platform.

In this blog post, we will discuss where simulation and CAE tools work best in automotive engineering workflows, where they reach their limits, and where an AI solution can provide increased accuracy and ROI.

Automotive testing using CFD simulations & their limitations

Simulations are built on partial differential equations (PDEs) that describe the underlying physics. CFD, for example, involves complex iterative numerical solvers and requires efficient meshing techniques to discretise the physical domain, so that the fluid flow and its interaction with the vehicle can be modelled and solved accurately.
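To make "discretise the domain, then iterate" concrete, here is a deliberately tiny sketch, far simpler than a real CFD solver (which solves the 3D Navier-Stokes equations on millions of cells). It assumes the 1D steady diffusion equation u''(x) = 0 on a uniform mesh with fixed end values, and solves it with Jacobi iteration until the updates fall below a tolerance. The function name `solve_1d_laplace` and its parameters are hypothetical.

```python
def solve_1d_laplace(n_cells, u_left, u_right, tol=1e-10, max_iter=100_000):
    """Solve u''(x) = 0 on [0, 1] with fixed end values using Jacobi iteration.

    The domain is split into n_cells uniform cells (n_cells + 1 mesh nodes).
    The central-difference stencil u[i-1] - 2*u[i] + u[i+1] = 0 gives the
    update u[i] = (u[i-1] + u[i+1]) / 2 for every interior node.
    """
    u = [0.0] * (n_cells + 1)
    u[0], u[-1] = u_left, u_right  # Dirichlet boundary conditions
    for iteration in range(1, max_iter + 1):
        new_u = u[:]  # Jacobi: every update uses only the previous iterate
        for i in range(1, n_cells):
            new_u[i] = 0.5 * (u[i - 1] + u[i + 1])
        change = max(abs(a - b) for a, b in zip(new_u, u))
        u = new_u
        if change < tol:  # converged: further sweeps barely change the solution
            return u, iteration
    raise RuntimeError("Jacobi iteration did not converge")


u, iterations = solve_1d_laplace(n_cells=20, u_left=0.0, u_right=1.0)
print(f"converged in {iterations} iterations; u(0.5) = {u[10]:.6f}")
```

Even in this toy case, doubling `n_cells` roughly quadruples the number of Jacobi iterations needed, a small-scale version of the accuracy versus run-time trade-off that real solvers face.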

CFD simulation results for automotive testing & automotive fluids

The results can then be post-processed to obtain important output quantities such as drag, downforce, and surface pressure on the vehicle, as well as the turbulence of the surrounding air.
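Integrated forces such as drag are usually reported as dimensionless coefficients, using the standard relation F = ½ρv²CA (density ρ, speed v, coefficient C, reference area A). The sketch below shows that step, assuming sea-level air density; the function names and the 500 N / 30 m/s / 2.2 m² figures are hypothetical, not results from the source. Downforce works the same way with a lift coefficient.

```python
AIR_DENSITY = 1.225  # kg/m^3, standard sea-level air density (assumed)


def dynamic_pressure(speed_ms, rho=AIR_DENSITY):
    """q = 0.5 * rho * v^2, in pascals."""
    return 0.5 * rho * speed_ms ** 2


def force_coefficient(force_n, speed_ms, area_m2, rho=AIR_DENSITY):
    """Non-dimensionalise an integrated force: C = F / (q * A)."""
    return force_n / (dynamic_pressure(speed_ms, rho) * area_m2)


def force_from_coefficient(coeff, speed_ms, area_m2, rho=AIR_DENSITY):
    """Invert the relation to estimate the force at another speed: F = C * q * A."""
    return coeff * dynamic_pressure(speed_ms, rho) * area_m2


# Hypothetical post-processed drag force: 500 N at 30 m/s on a 2.2 m^2 frontal area
cd = force_coefficient(500.0, 30.0, 2.2)
print(f"C_D = {cd:.3f}")
print(f"drag at 40 m/s = {force_from_coefficient(cd, 40.0, 2.2):.0f} N")
```

Because the force scales with v², a single coefficient lets engineers estimate drag across speeds without re-running the simulation, provided the flow regime does not change much.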

Turbulent flow around a vehicle.

An engineer would typically run tens to hundreds of simulations, varying the boundary conditions, such as wind speed and wind direction, to match real-world behaviour, and incorporating different designs, such as changes to the car’s body shape, rim design, and spoiler profile.
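The run count grows multiplicatively with every variable that is swept. As a minimal sketch, assuming a full-factorial sweep over hypothetical values (none of these levels come from the source), `itertools.product` enumerates every combination, each of which would be one simulation:

```python
from itertools import product

# Hypothetical operating conditions and design variants (illustrative values only)
wind_speeds_ms = [20, 30, 40, 50]
yaw_angles_deg = [0, 5, 10]  # wind direction relative to the car
rim_designs = ["rim_A", "rim_B", "rim_C"]
spoiler_profiles = ["low", "high"]

# Full-factorial run matrix: every combination becomes one CFD simulation
run_matrix = [
    {"speed": s, "yaw": y, "rim": r, "spoiler": p}
    for s, y, r, p in product(wind_speeds_ms, yaw_angles_deg, rim_designs, spoiler_profiles)
]
print(len(run_matrix))  # 4 * 3 * 3 * 2 combinations
```

Four variables with only a few levels each already yield 72 runs; at several hours per run, that is weeks of compute.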

Unfortunately, equations such as the Navier-Stokes equations have no general closed-form solution, so engineers must rely on numerical approximations and special modelling techniques. A trade-off then arises, as the accuracy of the results depends on parameters such as the number of iterations, the time-step size, the mesh resolution, and much more.

However, improving these parameters also greatly increases the time it takes to run accurate simulations for reliable automotive testing.
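A back-of-the-envelope estimate shows how quickly refinement becomes expensive. This sketch assumes a uniform 3D mesh, a cost per time step proportional to the number of cells, and an explicit scheme whose stable time step shrinks with cell size (a CFL-type limit). Real solvers use locally refined meshes and often implicit schemes, so actual scaling differs; the function name and the 4-hour baseline are hypothetical.

```python
def relative_run_time(refinement, dimensions=3, cfl_limited=True):
    """Rough run-time multiplier when every cell edge is divided by `refinement`.

    Assumes cost per time step is proportional to the number of cells and,
    if `cfl_limited`, that the stable time step shrinks in proportion to the
    cell size, so proportionally more steps are needed.
    """
    cell_factor = refinement ** dimensions
    step_factor = refinement if cfl_limited else 1
    return cell_factor * step_factor


baseline_hours = 4  # hypothetical run time on the coarse mesh
for r in (1, 2, 4):
    print(f"refine x{r}: ~{baseline_hours * relative_run_time(r)} hours")
```

Under these assumptions, halving the cell size multiplies the cost by 2³ × 2 = 16, which is why accuracy gains are so costly in run time.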

Engineers want simulation results to be as accurate as possible, which often involves tedious tweaking of numerical solver settings and adjusting the meshing of their geometry. They also want to ensure the automotive testing simulation is robust, ideally bug-free, and converges successfully for a wide range of inputs. Verifying this adds more time to the setup, and increasing robustness often comes at the cost of accuracy.

This is where machine learning (ML) and self-learning models can assist the automotive industry in many ways. The whole point of CFD is to predict the performance of a design (factoring in all automotive components) before spending time and money on prototyping and testing, so that engineers can reduce the number of iterations it takes to reach their ideal design. ML offers an alternative modelling approach that serves the same goal.

The self-learning model approach to automotive testing

By training an ML model to learn the performance and physics based on existing data, engineers can use these models to run “virtual tests” and get predictions for new designs, in new conditions, in the order of seconds as opposed to hours.

This is a huge time saver that allows quicker iteration on different designs to find a potential solution. The increased speed also opens up the possibility of using AI optimisation tools as part of generative design in order to suggest new geometries.
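The idea of a fast stand-in model (often called a surrogate) can be sketched with k-nearest-neighbour regression, chosen here only because it fits in a few lines of standard-library Python; it is not a description of the models Monolith uses, and production surrogates are typically Gaussian processes, neural networks, and similar. The `expensive_simulation` function is a made-up analytic formula standing in for a multi-hour CFD run, and the design variables and bounds are hypothetical.

```python
import math
import random


def expensive_simulation(ride_height_mm, spoiler_angle_deg):
    """Stand-in for a multi-hour CFD run: a made-up analytic drag coefficient."""
    return 0.30 + 0.00002 * (ride_height_mm - 100) ** 2 + 0.002 * spoiler_angle_deg


# Hypothetical design-space bounds, used to scale inputs to [0, 1] so that
# both variables contribute comparably to the distance calculation
BOUNDS = [(80.0, 120.0), (0.0, 15.0)]


def normalise(design):
    return [(v - lo) / (hi - lo) for v, (lo, hi) in zip(design, BOUNDS)]


class KNNSurrogate:
    """k-nearest-neighbour regression: predict the mean output of the k closest designs."""

    def __init__(self, k=3):
        self.k = k
        self.points = []  # (normalised design, output) pairs

    def fit(self, designs, outputs):
        self.points = [(normalise(d), y) for d, y in zip(designs, outputs)]
        return self

    def predict(self, design):
        x = normalise(design)
        nearest = sorted(self.points, key=lambda p: math.dist(p[0], x))[: self.k]
        return sum(y for _, y in nearest) / len(nearest)


# "Training data": the slow simulation evaluated once on a sample of designs
random.seed(0)
designs = [(random.uniform(80, 120), random.uniform(0, 15)) for _ in range(200)]
outputs = [expensive_simulation(*d) for d in designs]
model = KNNSurrogate(k=5).fit(designs, outputs)

# Afterwards, each new design is evaluated by the model in well under a second
new_design = (95.0, 7.5)
print(model.predict(new_design), expensive_simulation(*new_design))
```

The expensive step happens once, when the training data is generated; every later query only searches the stored points.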

There are two approaches to training such a model. First, engineers can run an initial Design of Experiments (DoE) set of simulations and train an ML model on that data. Alternatively, engineers can train the ML model on physical test data, such as data collected in a wind tunnel or on track.
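The source does not say how the initial DoE is chosen. One common space-filling option is Latin hypercube sampling, sketched below under that assumption: each variable’s range is split into equal strata, and every stratum is sampled exactly once, so a small number of runs still covers the whole range of each variable. The function name and the example bounds are hypothetical.

```python
import random


def latin_hypercube(n_samples, bounds, seed=None):
    """Latin hypercube sample: each variable's range is split into n_samples
    equal strata, and each stratum is used exactly once per variable."""
    rng = random.Random(seed)
    columns = []
    for lo, hi in bounds:
        width = (hi - lo) / n_samples
        # One random point inside each stratum, then shuffle the stratum order
        # independently per variable so the pairings are random
        column = [lo + (i + rng.random()) * width for i in range(n_samples)]
        rng.shuffle(column)
        columns.append(column)
    return list(zip(*columns))


bounds = [(80.0, 120.0), (0.0, 15.0)]  # hypothetical ride height (mm), spoiler angle (deg)
doe = latin_hypercube(10, bounds, seed=1)
for run in doe:
    print(run)
```

Compared with a full-factorial grid, the number of runs here is chosen freely rather than fixed by the number of levels per variable.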

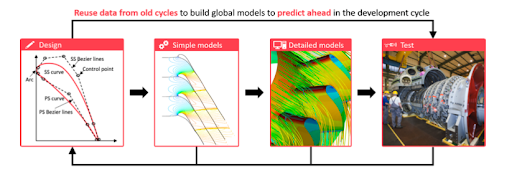

Automotive industry AI-based engineering workflow: performance prediction ahead of time.

The first approach has the advantage that it’s cheaper to collect large volumes of data from simulations than from physical tests. Engineers still have to put in the initial effort of running a set of simulations, which takes time, but after the initial DoE they can validate new designs using the ML model rather than running another set of simulations, which saves time when iterating.

The downside is that an ML model is only as good as the data it’s trained on: because simulation results are not always accurate, a model trained on them can at best mimic that level of accuracy.

Benefits of using a machine learning model for automotive testing

The advantage of the second approach, training on test data, is that the ML model now learns the true real-world physics. Unfortunately, collecting large volumes of test data can be prohibitively expensive.

If engineers are able to train on test data, the benefits are massive. They can run new designs through the ML model before the next round of testing and rule out designs in seconds without ever running the physical tests, which can reduce the total number of tests they have to run by up to 70%.
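The screening step can be sketched as a simple filter: only designs the model predicts could meet the target go on to physical testing. The `margin` parameter is an assumption added here so that a design is only ruled out when it is predicted to miss the target by more than the expected model error. The candidate names and predicted drag coefficients are made up for illustration and are not meant to reproduce the 70% figure.

```python
def screen_designs(candidates, predict, target_cd, margin=0.0):
    """Split candidates into a shortlist for physical testing and those ruled out.

    A design is kept if its predicted drag coefficient is within `margin` of
    the target, leaving room for model error before discarding it.
    """
    shortlisted, ruled_out = [], []
    for design in candidates:
        if predict(design) <= target_cd + margin:
            shortlisted.append(design)
        else:
            ruled_out.append(design)
    return shortlisted, ruled_out


# Hypothetical model predictions for six candidate designs (illustrative numbers only)
predicted_cd = {"A": 0.29, "B": 0.34, "C": 0.32, "D": 0.36, "E": 0.30, "F": 0.33}
shortlist, rejected = screen_designs(list(predicted_cd), predicted_cd.get, target_cd=0.30, margin=0.01)
print("send to wind tunnel:", shortlist)
print(f"{len(rejected) / len(predicted_cd):.0%} of physical tests avoided")
```

A wider margin trades fewer avoided tests for a lower risk of discarding a good design because of model error.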


Using the Monolith AI dashboard to understand complex vehicle dynamics.

Can AI speed up your automotive testing process & simulation success?

Simulations and ML are two methods for achieving the same goal – improving the efficiency with which engineers are able to validate new designs, so that they can get to their end design in the fastest and cheapest way.

Simulations are ideal when engineers are able to accurately model the physics in a computationally light way. In areas such as suspension kinematics, simulations can often run with high accuracy in the order of minutes, allowing many iterations as well as strong confidence that the vehicle will still perform well once it is built.

When to use ML models for automotive testing

On the flip side, ML is ideal when simulations are either too inaccurate or too slow, and physical testing is expensive and time-consuming. Areas such as aerodynamics are ripe for ML trained on test data, which can greatly accelerate highly iterative design processes.

Engineers can’t physically test every design, and where CFD simulations aren’t accurate enough, training an ML model on past test data lets them reduce the total number of tests and maximise the performance of the final design.

AI & machine learning for automotive testing services

Empowering engineers to integrate ML into their workflow is a challenge in itself. Most data science tools are designed for data scientists, not engineers, and are not designed for CAD and CAE data.

When evaluating the most suitable method, it’s important to remember the original goal. There are often multiple, possibly competing, objectives to optimise for and balance: reducing time-to-market, reducing overall R&D costs, and improving the performance of the final design.

Therefore, when assessing whether ML is a better approach than simulations or lab testing, the key is not the accuracy of the ML models, but whether they can augment existing methods, which may include a mix of simulations and physical tests, in such a way that the multi-objective target is always met.
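One standard way to reason about competing objectives (not something the source prescribes) is a Pareto front: keep only the options that no other option beats on every objective at once. The sketch below assumes three objectives taken from the text (time-to-market, R&D cost, final performance); the plan names and all numbers are hypothetical placeholders, not measured outcomes of any testing strategy.

```python
from typing import NamedTuple


class Option(NamedTuple):
    name: str
    months_to_market: float  # lower is better
    rd_cost: float           # lower is better (arbitrary units)
    performance: float       # higher is better (arbitrary units)


def dominates(a, b):
    """a dominates b if it is no worse on every objective and strictly better on at least one."""
    no_worse = (a.months_to_market <= b.months_to_market
                and a.rd_cost <= b.rd_cost
                and a.performance >= b.performance)
    strictly_better = (a.months_to_market < b.months_to_market
                       or a.rd_cost < b.rd_cost
                       or a.performance > b.performance)
    return no_worse and strictly_better


def pareto_front(options):
    """Return the options that are not dominated by any other option."""
    return [o for o in options if not any(dominates(other, o) for other in options)]


# Hypothetical mixes of simulation, physical testing and ML (made-up numbers)
plans = [
    Option("plan_A", 18, 100, 1.00),
    Option("plan_B", 12, 60, 0.90),
    Option("plan_C", 10, 55, 0.92),
    Option("plan_D", 12, 70, 1.00),
    Option("plan_E", 14, 80, 0.95),
]
print([p.name for p in pareto_front(plans)])
```

The front does not pick a single winner; it narrows the choice to the trade-offs worth discussing, and the final pick depends on how the objectives are weighted.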

Monolith AI provides the solutions for your automotive testing problems

Monolith AI is a software platform designed for engineers, by engineers, and its low-code interface allows engineers to create end-to-end ML workflows without any coding or data science knowledge.

Our software was built to work with your engineering test data from all measurements, signals or sensors including time-series, 3D CAD, and tabular data.

Automotive engineers use Monolith software to build self-learning models to quickly understand and instantly predict the performance of complex systems so they can test less, learn more, and develop better quality products faster.